Generative AI in Creative Fields in 2024

Advances in generative AI are reshaping creativity and innovation. Artificial intelligence is now woven into creative fields, changing how art, music, literature, and design are conceived, created, and consumed. This evolution is not just altering the toolkit available to creators; it is also redefining the boundaries of creativity itself.

In recent years, the emergence of AI as a partner in the creative process has been both exhilarating and thought-provoking. From AI-generated images that can be hard to tell apart from human-made art to algorithmically composed music that moves listeners, generative AI is pushing us to reconsider what it means to be a creator in the digital age.

In this article, we’ll explore the state of generative AI in creative fields in 2024: what it is, how it works, where it is being applied, and the benefits and challenges it brings for artists and for society.

What is Generative AI?

Generative AI refers to algorithms and models that produce new content, whether text, images, music, or even ideas, that hasn’t been explicitly programmed into them. Traditional AI systems typically classify or predict: they flag spam, recommend a product, or forecast demand. Generative models instead learn the patterns in their training data and use them to create new paragraphs, artwork, or melodies, often from nothing more than a short prompt. It’s like having a muse that not only inspires but also participates in the creative process, offering outputs that can sometimes astonish even the most seasoned creators.
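Text generators usually build their output one word (or word fragment) at a time, repeatedly predicting what should come next. The toy sketch below illustrates that loop with a simple word-level bigram (Markov chain) model. It is only a loose analogy, far simpler than the neural networks behind real tools, and the corpus and the function names `next_word_table` and `sample_text` are hypothetical.

```python
import random
from collections import defaultdict

# Hypothetical toy corpus; real models train on vastly larger datasets.
CORPUS = "the artist paints the canvas and the machine paints the sky"


def next_word_table(text):
    """Record, for each word, every word that follows it in the text."""
    words = text.split()
    table = defaultdict(list)
    for prev, nxt in zip(words, words[1:]):
        table[prev].append(nxt)
    return table


def sample_text(table, start, length=8, seed=None):
    """Generate up to `length` words by repeatedly sampling a next word."""
    rng = random.Random(seed)
    out = [start]
    while len(out) < length:
        followers = table.get(out[-1])
        if not followers:  # dead end: no word ever followed this one
            break
        # Followers that appeared more often in the corpus are picked more often.
        out.append(rng.choice(followers))
    return " ".join(out)


table = next_word_table(CORPUS)
print(sample_text(table, "the", seed=1))
```

Each run can recombine familiar fragments into word sequences that never appear verbatim in the corpus, which hints, at a tiny scale, at how a model can produce something "new" from learned patterns.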

How Does Generative AI Work?

Under the hood, generative AI relies on deep neural networks trained on vast datasets, from which they learn the patterns, styles, and structures inherent in the data. Well-known model families include Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs); most of today’s popular tools also rely on diffusion models for images and transformer-based large language models for text.

GANs, for instance, work through a push and pull between two neural networks: the generator, which creates new samples, and the discriminator, which tries to tell those samples apart from real examples in the training data. As the discriminator gets better at spotting fakes, the generator is pushed to produce increasingly convincing and realistic outputs.
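To make that push and pull concrete, here is a deliberately tiny sketch in Python with NumPy. It is not how production image models are built: the "real data" is just numbers drawn from a normal distribution with mean 4 (an arbitrary assumption), the generator is a straight line `a*z + b`, the discriminator is a small logistic model, and all names are hypothetical. The gradients are derived by hand for the usual GAN losses, and the gradient clipping is an added stabilizing assumption.

```python
import numpy as np

# Toy 1-D GAN with hand-derived gradients (illustrative assumptions only):
#   real data      x ~ Normal(REAL_MEAN, REAL_STD)
#   generator      G(z) = a*z + b,             z ~ Normal(0, 1)
#   discriminator  D(x) = sigmoid(w2*x**2 + w1*x + c)
REAL_MEAN, REAL_STD = 4.0, 1.25


def sigmoid(s):
    # Clip logits so np.exp cannot overflow.
    return 1.0 / (1.0 + np.exp(-np.clip(s, -30.0, 30.0)))


def generate(g, z):
    a, b = g
    return a * z + b


def features(x):
    # Quadratic features let D compare both the location and spread of samples.
    return np.stack([x**2, x, np.ones_like(x)])


def discriminate(d, x):
    # Probability, according to D, that each sample in x is real.
    return sigmoid(d @ features(x))


def d_grad(d, x_real, x_fake):
    # Gradient of -mean(log D(real)) - mean(log(1 - D(fake))) w.r.t. d = (w2, w1, c).
    real_term = (discriminate(d, x_real) - 1.0) * features(x_real)
    fake_term = discriminate(d, x_fake) * features(x_fake)
    return real_term.mean(axis=1) + fake_term.mean(axis=1)


def g_grad(g, d, z):
    # Gradient of the "non-saturating" generator loss -mean(log D(G(z))) w.r.t. g = (a, b).
    x = generate(g, z)
    w2, w1, _ = d
    upstream = (discriminate(d, x) - 1.0) * (2.0 * w2 * x + w1)  # per-sample dLoss/dx
    return np.array([(upstream * z).mean(), upstream.mean()])


def train(steps=2000, lr=0.02, batch=256, seed=0):
    rng = np.random.default_rng(seed)
    g = np.array([1.0, 0.0])  # generator starts out producing Normal(0, 1)
    d = np.zeros(3)           # discriminator starts out answering 0.5 everywhere
    for _ in range(steps):
        x_real = rng.normal(REAL_MEAN, REAL_STD, batch)
        z = rng.normal(0.0, 1.0, batch)
        # 1) Discriminator step: get better at telling real from generated samples.
        d -= lr * np.clip(d_grad(d, x_real, generate(g, z)), -5.0, 5.0)
        # 2) Generator step: shift outputs toward where D now says "real".
        g -= lr * np.clip(g_grad(g, d, z), -5.0, 5.0)
    return g, d


g, d = train()
print("generator a, b:", g)
```

Calling `train()` returns the generator’s `(a, b)` and the discriminator’s weights. The discriminator’s feedback pushes `b` toward the real mean, though GAN training with plain gradient steps like these tends to oscillate rather than settle exactly, which is one reason real systems rely on more careful architectures and optimizers.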

How Generative AI Is Changing Creative Work

The impact of generative AI on creative work is profound and multifaceted. For artists and creators, it opens up new avenues for expression and experimentation. Many artists now collaborate with AI to produce visual art that merges human emotion with algorithmic precision, creating pieces that feel deeply personal yet carry a distinctive signature.

In writing, generative AI tools have become collaborators, helping authors overcome writer’s block, generate ideas, or even co-write stories. This partnership between humans and machines is redefining the very act of storytelling, making it a more inclusive and expansive endeavor.

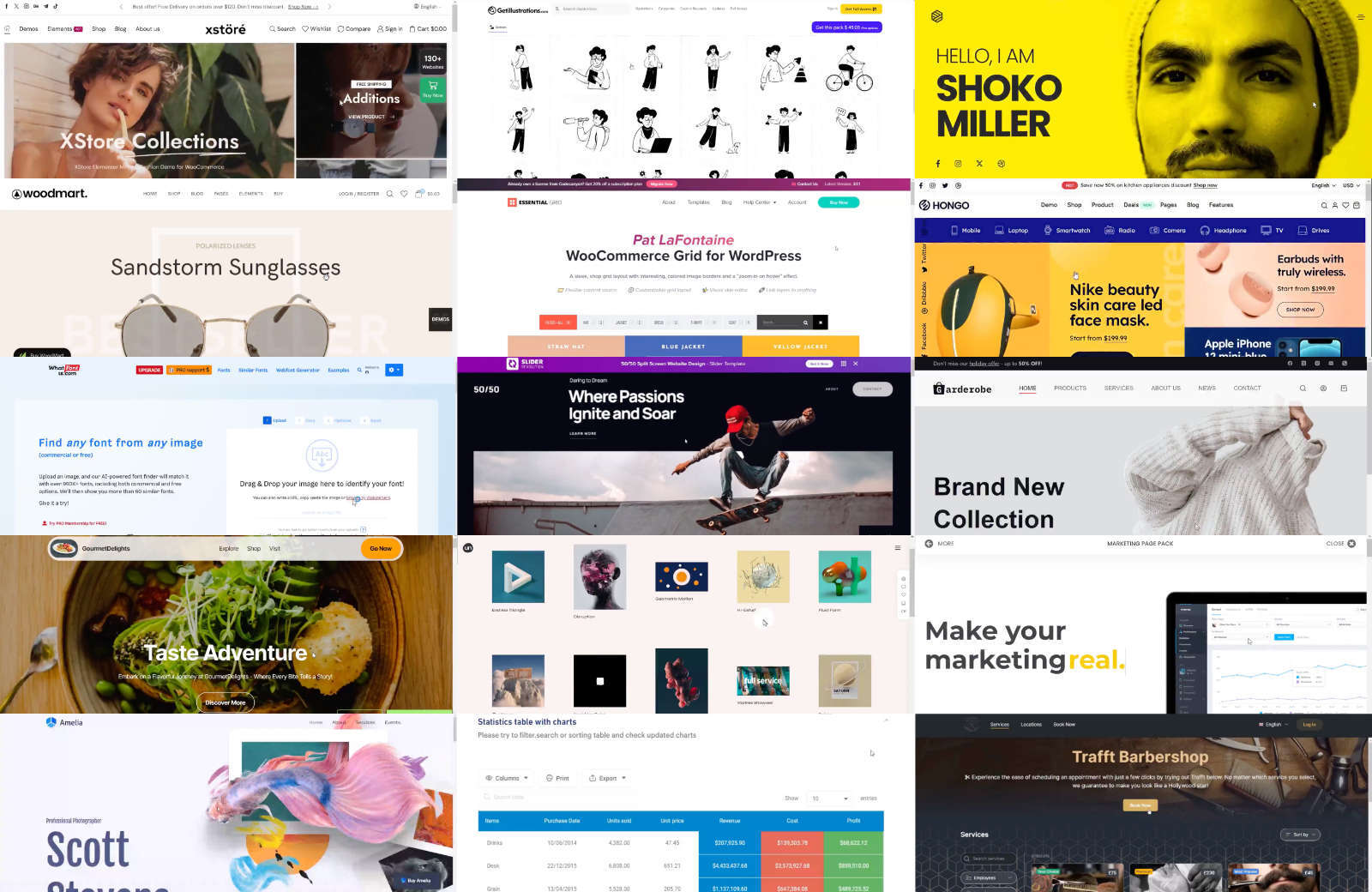

Moreover, generative AI is democratizing creativity, making tools and techniques available to a broader audience. Individuals without formal training in art or music can now explore their creative impulses, guided and aided by AI. This democratization is not just about making creation easier; it’s about unlocking the creative potential within each of us, challenging the notion that creativity belongs only to the traditionally trained or innately talented.

Applications of Generative AI

Every application, from art to scientific research, underscores the transformative power of generative AI. It’s a reminder that generative AI is not just a tool for creating and discovering but a lens through which we can glimpse the future of human creativity and innovation. Here are five of the main areas where it is being applied:

1. Art and Design

One of the most visually striking applications of generative AI lies in the fields of art and design. Artists and designers are leveraging these tools to create stunning visuals, patterns, and artworks that might take a human counterpart weeks or months to produce.

It’s not just about speed; it’s about introducing a new form of collaboration between human and machine intelligence. This synergy enables the creation of pieces that are not only beautiful but also carry a depth of complexity and novelty that’s genuinely breathtaking.

2. Music and Sound Production

In music, generative AI is hitting all the right notes, transforming the way melodies are composed and produced. As someone who dabbles in music, witnessing AI algorithms compose pieces that resonate with human emotions is utterly fascinating.

These AI systems can generate music in various styles and genres, offering artists and producers a bottomless well of inspiration and a new set of tools for experimentation and expression.

3. Writing and Content Creation

Generative AI has also made significant strides in writing and content creation. From drafting articles to composing poetry, AI tools are now capable of producing written content that’s increasingly difficult to distinguish from that written by humans.

This doesn’t mean AI is replacing human writers. Rather, it offers a new way to overcome writer’s block, generate ideas, or draft initial content that writers can then refine and imbue with their own voice and perspective.

4. Gaming and Interactive Media

In the gaming world, generative AI is revolutionizing how environments, narratives, and characters are developed. Game designers are using AI to create dynamic, evolving worlds that respond to player actions in ways previously unimaginable.

This not only enhances the gaming experience but also opens up new avenues for storytelling and player engagement.

5. Scientific Research and Innovation

Beyond the realms of creativity and entertainment, generative AI is playing a pivotal role in scientific research and innovation. It’s being used to model complex systems, simulate experiments, and predict outcomes, accelerating the pace of discovery in fields ranging from pharmaceuticals to environmental science.

The ability of AI to sift through vast datasets and identify patterns and connections that might elude human researchers is a game-changer, paving the way for breakthroughs that could reshape our world.

Benefits and Challenges of Generative AI

The potential of generative AI to enrich our lives and solve complex problems is immense, but it requires a concerted effort to harness its power responsibly. As we move forward, our goal should be to create a future where generative AI serves as a force for good, amplifying human creativity and ingenuity while safeguarding against its potential pitfalls.

Benefits of Generative AI

Unleashing Creativity

One of the most exhilarating benefits of generative AI is its ability to unlock new dimensions of creativity. It serves as a muse and a collaborator, offering creators a palette of possibilities that were previously unimaginable. This technology empowers artists, musicians, writers, and designers to push the boundaries of their craft, blending human intuition with AI’s capability to generate novel ideas and patterns.

Accelerating Innovation

Generative AI is a catalyst for innovation, dramatically accelerating the process of ideation, experimentation, and discovery. In fields like drug development and environmental science, it can sift through vast datasets to identify patterns and predict outcomes, leading to breakthroughs at a pace that would be impossible for humans alone. This acceleration is not just about speed; it’s about the capacity to explore a broader set of possibilities and solutions to complex problems.

Enhancing Productivity

The efficiency and productivity gains offered by generative AI are substantial. Whether drafting content, creating prototypes, or generating code, AI tools can produce in seconds a first version of work that might take a person hours or days. This frees creative and professional individuals to focus on the more nuanced aspects of their work, where human insight and empathy are irreplaceable.

Challenges of Generative AI

Ethical and Moral Considerations

The rise of generative AI has ignited a flurry of ethical and moral questions. Issues of copyright, authenticity, and the potential for creating misleading or harmful content are at the forefront of the discourse. Navigating these waters requires a thoughtful approach, balancing the benefits of generative AI with the need to protect intellectual property and prevent misuse.

Impact on Employment and Skills

There’s a palpable concern about the impact of generative AI on employment, particularly in creative fields. As AI becomes more capable, there’s fear that it might replace human roles. However, my perspective is more optimistic; I see generative AI as augmenting human capabilities rather than replacing them. The challenge lies in adapting our skills and education systems to prepare for a future where AI is a tool, not a competitor.

Technical and Security Issues

With the adoption of generative AI, technical and security challenges abound. Ensuring the integrity of the data used to train these models, protecting against biases, and safeguarding against malicious use are critical issues that must be addressed. These challenges underscore the importance of robust, transparent AI governance and the development of secure, ethical AI systems.

Conclusion

In 2024, it’s evident that we’re at the dawn of a transformative era, where the convergence of artificial intelligence and human ingenuity is unlocking unprecedented opportunities for creativity and expression. The integration of generative AI into the arts has not only revolutionized traditional practices but also democratized creativity, making it more accessible to diverse voices worldwide.

Despite the challenges and ethical considerations that accompany its adoption, the potential for positive impact is immense. As we continue to explore this dynamic technology, the collaboration between creators and AI promises to redefine our understanding of creativity, fostering a future where technology amplifies human potential, paving the way for innovations that we have yet to imagine.

Featured image by ilgmyzin on Unsplash

The post Generative AI in Creative Fields in 2024 appeared first on noupe.